Research Interests

How do we interact with the world around us? One way is through our interactions with other people, which sets us apart from most other animals. The other is through our interaction with space, which connects us to most other animals. We are interested in understanding both aspects of human-environment interaction, and our research focuses specifically on person perception and spatial navigation.

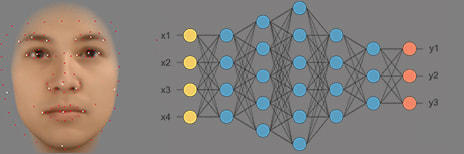

We use psychophysics, virtual environments, eye-movement tracking, computational modelling, and functional MRI to investigate how we process and respond to person- and space-related information in mind, body, and brain.

Research Projects

Face Processing in Mind, Brain, and Machine

Are human and model-based face processing similar? What makes machine face processing (not) like ours?

Holistic Processing of Faces and Nonface Objects

Why is face processing (not) special? What determines our face recognition ability?

Navigation in Real and Virtual Environments

How do we use various types of information for navigation? What determines our sense of direction and navigation ability?

Neural Mechanisms of Recognition and Navigation

How are facial and spatial information coded in the brain? How are recognition and navigation implemented in the brain?

Spatial Ability: Clinical Relevance and Educational Implications

Is spatial ability a cognitive marker for neurodegenerative diseases? Is spatial ability a reliable predictor of STEM performance?

Other-Race Effect (ORE) in Face Memory

Why are other-race faces difficult to differentiate? How can this difficulty be reduced in eyewitness memory?

Laura's PhD thesis examined how we perceive multiple dimensions of emotion in facial expressions and what factors contribute to the perceived similarity between different facial emotions.

Computational models trained for sex categorisation can achieve performance equivalent to that of humans. However, they are affected differently by subtle variations in faces, suggesting that they rely on a different mechanism from the one humans employ.

The award will support our research on what cognitive and neural processes determine our face recognition ability.

Some objects, including human faces and bodies, have an intrinsic facing/heading direction. How do we encode such orientation information in the brain? Here we show a stimulus-independent neural coding of face and body orientation in the occipitotemporal cortex.

Laura has investigated perceptual similarity between facial emotions in Asian and European people and tested what drives the similarities and differences between the two groups.

Laura has investigated perceptual similarity between facial emotions in categorical and profiling responses and tested what drives our perceptual similarity.

Averaging more and more faces to create a face average not only changes the physical appearance of the resulting faces but also affects how we see and recognise those faces.

Body-based person identity can be decoded across viewpoint in the fusiform body area and right anterior temporal cortex, and it can be decoded from neural activity elicited by the same person's faces.

Laura has investigated perceptual similarity between facial emotions in static and dynamic faces and tested what drives our perceptual similarity.


Word cloud generated from the abstracts of all our publications